Last week we rolled out some updates to our face recognition algorithm. First of all, the demo on our website was updated with a recognition page.

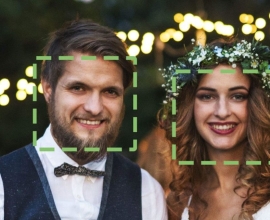

Here you can pick two photos from the provided set, or use your own images, to see recognition results between them. By default the two most similar faces in the photos are selected, but you can click or tap around to see the similarity score of one face (marked with a dotted rectangle) with all the others.

Faces/group improvements

The demo uses new functionality we added to the faces/group method: a similarity matrix. The method now takes an optional return_similarities parameter. If it is set to true, then for each detected face tag in the response we return similarity scores with all other tags in all photos in the request (zero scores are omitted to keep the response size reasonably small). With this new functionality you now have three options for grouping people in photos: fully automatic; automatic with your own threshold value for grouping (instead of the default value of 70); and manual, where you examine the similarity matrix and add your own logic on top for the greatest flexibility. The third option also covers the image verification scenario, when you need an answer to a question like “How similar are the two persons in these two photos?”.
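The manual option can be sketched roughly as follows. This is only an illustration: the similarity matrix is assumed to have already been flattened into `(tag_a, tag_b, score)` triples, the function name `group_faces` is made up for this example, and treating a score equal to the threshold as a match is our assumption, not documented behavior.

```python
from collections import defaultdict


def group_faces(tag_ids, similarities, threshold=70):
    """Group tags that are linked by a similarity score >= threshold.

    tag_ids      -- all tag ids seen in the request (hypothetical input shape)
    similarities -- iterable of (tag_a, tag_b, score) triples; missing pairs
                    are treated as score 0, matching the omitted zero scores
    """
    parent = {t: t for t in tag_ids}

    def find(t):
        # Follow parent links to the group representative (with path halving).
        while parent[t] != t:
            parent[t] = parent[parent[t]]
            t = parent[t]
        return t

    for a, b, score in similarities:
        if score >= threshold:  # assumption: the threshold itself counts as a match
            parent[find(a)] = find(b)

    groups = defaultdict(list)
    for t in tag_ids:
        groups[find(t)].append(t)
    return sorted(sorted(g) for g in groups.values())


tags = ["t1", "t2", "t3", "t4"]
pairs = [("t1", "t2", 85), ("t2", "t3", 40), ("t3", "t4", 72)]
print(group_faces(tags, pairs))       # default threshold of 70
print(group_faces(tags, pairs, 90))   # stricter, custom threshold
```

For the verification scenario you would skip the grouping entirely and simply read the single score between the two tags you care about.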

Faces/group works great when there is a small, finite set of photos to compare. But if you need to compare a person in a photo with a database of two million people, or group people across several hundred or several thousand photos, then faces/recognize is the better choice.

The recognition workflow

The recognition scenario has a slightly more complicated workflow. People have to be enrolled in a database first: their faces have to be saved using the tags/save method, and the user has to be prepared for recognition using the faces/train method. Additional face impressions can be added later with tags/save, or existing ones removed with tags/remove; in either case faces/train has to be re-run on the user to update their information for recognition. Note that the more impressions of a user you add (with different head rotation, expression, lighting conditions, with and without glasses, beard, etc.), the more likely the user is to be reliably recognized later. Also note that if you want to remove a user from the database, you have to remove all of the user's tags from the system (call tags/remove with the tag list obtained from tags/get for the user) and then call faces/train for the same user to remove their information from the recognition system.
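The order of calls in this workflow can be sketched as below. The method names come from the text above, but everything else is an assumption made for illustration: `call` stands in for whatever HTTP client you use, the parameter names (`uid`, `uids`, `tids`) and the comma-separated tag list should be checked against the API documentation, and `fake_call` is only a recording stub so the sketch runs without network access.

```python
def enroll(call, user_id, tag_ids):
    """Save face tags for a user, then train the user for recognition."""
    call("tags/save", uid=user_id, tids=",".join(tag_ids))
    call("faces/train", uids=user_id)


def remove_user(call, user_id):
    """Remove every tag of a user, then re-train to drop the user's data."""
    tag_ids = call("tags/get", uids=user_id)  # assumed to return a list of tag ids
    if tag_ids:
        call("tags/remove", tids=",".join(tag_ids))
    call("faces/train", uids=user_id)


# Recording stub used in place of a real client (hypothetical).
calls = []
stored = {"alice@demo": ["t1", "t2"]}


def fake_call(method, **params):
    calls.append((method, params))
    if method == "tags/get":
        return list(stored.get(params["uids"], []))
    return None


enroll(fake_call, "alice@demo", ["t1", "t2"])
remove_user(fake_call, "alice@demo")
print([method for method, _ in calls])
```

The point of the sketch is the ordering: faces/train always follows a change to the user's tags, whether tags were added or removed.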

The recognition results for each tag in the response from the faces/recognize method contain a list of value pairs: the id of a user the tag was matched with and the confidence value of the match (similarity score). You should compare the confidence with the threshold value of the tag to decide whether the match is reliable. The threshold value depends on the quality of the face itself and on the size of the database it was matched against.
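A minimal sketch of that comparison is shown below. The dictionary layout (`threshold`, `uids`, `uid`, `confidence`) is an assumed shape for a single tag from the response, so verify the field names against a real response; counting a confidence equal to the threshold as reliable is also our assumption.

```python
def reliable_matches(tag):
    """Return matches whose confidence reaches the tag's own threshold,
    best match first."""
    threshold = tag["threshold"]
    hits = [m for m in tag["uids"] if m["confidence"] >= threshold]
    return sorted(hits, key=lambda m: m["confidence"], reverse=True)


# Hand-written sample tag, not real API output.
tag = {
    "threshold": 60,
    "uids": [
        {"uid": "bob@demo", "confidence": 45},
        {"uid": "alice@demo", "confidence": 88},
        {"uid": "carol@demo", "confidence": 61},
    ],
}

for match in reliable_matches(tag):
    print(match["uid"], match["confidence"])
```

Because the threshold is reported per tag, the same confidence value may be reliable for one face and not for another.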

That is the second improvement we have rolled out: a dynamic tag threshold value, along with normalization of the matching similarity score that is more natural for a human. You may notice that the confidence values you receive in the response now feel (and are) more accurate.

Because faces/recognize (and faces/group, if requested) can be used to match a person against very large databases, a request can potentially yield a lot of matched users. You can now use the limit parameter to cap the number of top matching results returned. If it is not specified, a value of 100 is used.

The meaning of label

We see from support requests that there is some misunderstanding about the value of the label field in the response. The label returned with a tag in the results is the same label (if any) that was used during tags/save or tags/add for that tag in that photo. It is not a recognition result: faces/detect may return the same label for the same tag in the same photo. The label is saved for the tag, not for the user. If you need to store additional information for a user and retrieve it along with recognition results, you have to store it in your own information system and pull it from there after obtaining the recognized user ids.
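As a rough illustration of keeping user information on your side, the sketch below joins recognized user ids with a local store. The in-memory dictionary `USER_INFO` and the function `attach_user_info` are hypothetical stand-ins for your own database and lookup code.

```python
# Stand-in for your own information system (hypothetical data).
USER_INFO = {
    "alice@demo": {"name": "Alice", "department": "R&D"},
}


def attach_user_info(recognized_uids, store):
    """Pair each recognized user id with locally stored details.

    Ids that are unknown to the local store get None, so the caller can
    decide how to handle users that exist only in the recognition system.
    """
    return [{"uid": uid, "info": store.get(uid)} for uid in recognized_uids]


enriched = attach_user_info(["alice@demo", "bob@demo"], USER_INFO)
for row in enriched:
    print(row["uid"], row["info"])
```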

Make sure to check out what else is possible with the SkyBiometry API.